Network performance measurement


Network performance is often measured with easy-to-use utilities, such as sending a PING and checking its RTT (Round Trip Time), or by running a throughput test with a commonly used protocol like TCP or UDP. The result is often mistaken for the actual response time of a single component such as a server (e.g. the host that gets PINGed), because the runtimes of the other components in a network cannot easily be measured or are simply overlooked as a potential source of noticeable latency.

This article describes how to measure the latency of a single component in a network, such as the latency introduced by a single PHY, as well as how to measure the response time (application processing time) of a target/host, for example to a PING.

Measuring the target response time

The components with the most relevance in terms of latency are the MCU (as a whole system, mainly CPU + memory + Ethernet MAC unit) and the application processing time (performance of the network stack) that it takes to respond to a request. The following describes how to measure the response time for a PING (ICMP protocol) packet of 64 bytes (minimum Ethernet frame size including the 4-byte Frame Check Sequence (FCS)). The following setup is used:

Hardware requirements

- emPower eval board with NXP Kinetis K66 running @168MHz that gets PINGed. This is referred to as RESPONDER.

- emPower eval board with NXP Kinetis K66 running @168MHz that sends a PING. This is referred to as INITIATOR. Using an embedded target is optional, as the initiator's performance in sending the PING-request is not relevant for the result of this test.

- Direct cable connection between INITIATOR and RESPONDER to eliminate other network traffic.

- Oscilloscope with 4 channels

INITIATOR software setup

As the INITIATOR is not part of the response measurement, any host that initiates a PING-request to the RESPONDER is sufficient.

RESPONDER software setup

The following software setup is used to guarantee an optimized and stable test environment for the measurement:

- RTOS: embOS Cortex-M Embedded Studio V5.10.1.0

- Network stack: emNet V3.40a with Kinetis NI driver

- IDE/compiler: Embedded Studio V5.10 with SEGGER compiler and optimization level 2 for speed

- Software configuration: A regular release configuration (embOS 'R' library configuration) is used with the following modifications aside from defaults:

  - emNet:

    - IP_ConfigDoNotAddLowLevelChecks() is used, which saves approx. 800ns. The low-level checks are enabled by default to reduce CPU load by discarding packets early (e.g. broadcasts) that are not addressed to the target and would be discarded later anyhow. The timing of these checks depends on the services started on the target (e.g. open UDP sockets for a DHCP client), so they are excluded from the test.

    - GPIO#1 set->clr is used to signal the execution of the Rx interrupt for the received PING-request packet.

    - GPIO#2 set->clr is used to signal that the send logic of the Ethernet MAC for the PING-response has been started.
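The two GPIO markers can be sketched as follows. This is only an illustration of the set->clr pulse idea and not the emNet NI driver code: the register variable `GpioOut`, the pin masks, and the hook names `OnRxInterruptEntry()` and `OnTxStarted()` are hypothetical. On real hardware `GpioOut` would be replaced by the memory-mapped output register of the GPIO port in use.

```c
#include <stdint.h>

// Stand-in for a memory-mapped GPIO output register (hypothetical).
// On real hardware this would be the data output register of the port.
static volatile uint32_t GpioOut;

#define SCOPE_PIN_RX_INT  (1u << 0)  // GPIO#1: Rx interrupt handler entered
#define SCOPE_PIN_TX_GO   (1u << 1)  // GPIO#2: start transmit command given

// Generates a short set->clr pulse that the scope can trigger on.
// Other pins of the port are left untouched.
static void ScopePulse(uint32_t PinMask) {
  GpioOut |=  PinMask;
  GpioOut &= ~PinMask;
}

// Hypothetical hook: first statement of the Ethernet Rx interrupt handler.
static void OnRxInterruptEntry(void) {
  ScopePulse(SCOPE_PIN_RX_INT);
}

// Hypothetical hook: called right after the MAC/DMA was told to start sending.
static void OnTxStarted(void) {
  ScopePulse(SCOPE_PIN_TX_GO);
}
```

Keeping the pulse as short as two register writes keeps the added overhead small compared to the times being measured.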

Measurement setup

The target response time (to a PING) can be dissected into the following measurements:

- The time between the Ethernet MAC completely receiving the PING-request from the cable/PHY and signaling the Rx interrupt once the complete packet is ready to be processed by the Ethernet stack.

- The time it takes to evaluate the received PING-request in the stack and queue a PING-response in the driver for sending.

- The time between the Ethernet stack starting the transmit of the PING-response and the Ethernet MAC sending the data out to the PHY/cable.

To measure these sections, an oscilloscope with 4 channels is required. The channels in this example are assigned as follows:

| Ch# | Color | Label | Description |
|---|---|---|---|
| 1 | Yellow | RxPhyMac | Transfer from PHY => Ethernet MAC and MCU RAM. As this point is easier to measure (no link up detection signal, etc.), the PHY latency (approx. 235ns for a Davicom DM9161A) between cable => PHY is ignored. |
| 2 | Green | RxInt | GPIO#1 set->clr signaling the Rx interrupt handler getting executed. |
| 3 | Orange | TxGo | GPIO#2 set->clr signaling the response has been queued for sending (typically queued in the Ethernet DMA) and the start transmit command has been given. |
| 4 | Blue | TxMacPhy | Transfer from MCU RAM and Ethernet MAC => PHY. As this point is easier to measure (no link up detection signal, etc.), the PHY latency (approx. 235ns for a Davicom DM9161A) between PHY => cable is ignored. |

Fast-Ethernet (100Mbit) response time for a 64 byte PING packet

For our measurement we use the smallest possible Ethernet packet size allowed by the Ethernet IEEE 802.3 standard which is 64 bytes including the FCS. This gives us the worst header/payload ratio but at the same time the best impression of the actual response time that is more or less static for each packet of any size.
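As a quick sanity check of what a 64 byte frame contains and how long it occupies the wire, the arithmetic can be sketched in C. The helper names are hypothetical; the header sizes assume an untagged Ethernet II frame carrying IPv4 without options.

```c
#include <stdint.h>

// Sizes in bytes for an untagged Ethernet II frame carrying IPv4/ICMP.
#define ETH_HEADER_LEN   14u  // Destination MAC (6) + source MAC (6) + EtherType (2)
#define IPV4_HEADER_LEN  20u  // IPv4 header without options
#define ICMP_HEADER_LEN   8u  // Type, code, checksum, identifier, sequence number
#define ETH_FCS_LEN       4u  // Frame Check Sequence

// Number of ICMP payload bytes in a frame of FrameLen bytes (FCS included).
static uint32_t IcmpPayloadLen(uint32_t FrameLen) {
  return FrameLen - ETH_HEADER_LEN - IPV4_HEADER_LEN - ICMP_HEADER_LEN - ETH_FCS_LEN;
}

// Time in nanoseconds to serialize FrameLen bytes at the given rate in Mbit/s.
// Preamble, start-of-frame delimiter and inter-frame gap are not included.
static uint32_t WireTimeNs(uint32_t FrameLen, uint32_t MbitPerSec) {
  return (FrameLen * 8u * 1000u) / MbitPerSec;
}
```

For a 64 byte frame, `IcmpPayloadLen(64)` yields 18 bytes of PING payload, and `WireTimeNs(64, 100)` yields 5120ns of pure transfer time at 100Mbit.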

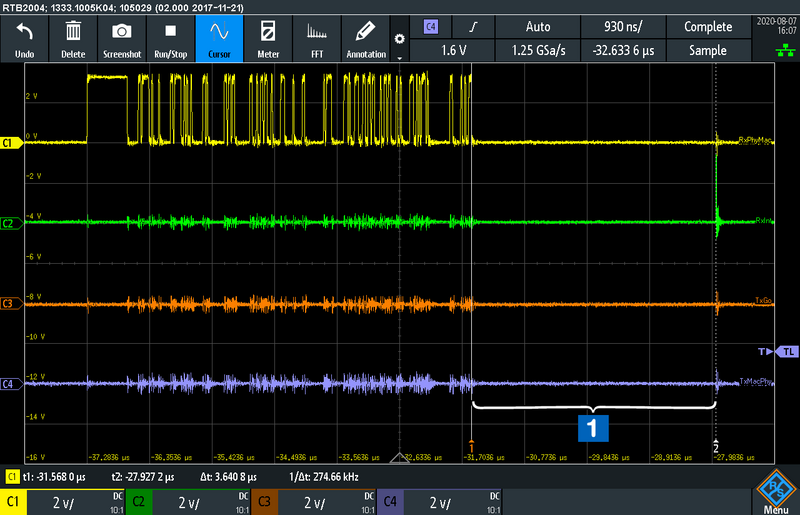

The following screenshot shows the response measurement for a PING-request packet of 64 bytes:

The overall reaction time to a PING therefore is ~31.55us.

For a more detailed view on what is going on, the following 3 measurement sections that can be seen on the screenshot above are described separately:

| Section | Description |
|---|---|
| Rx hardware latency | Latency between fully receiving a packet from the PHY => Ethernet MAC and until it is stored in MCU RAM and signaled ready to be processed via the Rx interrupt. To be ultra-precise, the PHY latency (approx. 235ns for a Davicom DM9161A) between cable => PHY would need to be added on top. |
| Application response time | Actual response time of the Ethernet stack for evaluating the packet and preparing/queuing a response. |
| Tx hardware latency | Latency between queuing a packet for sending with the hardware from MCU RAM and Ethernet MAC => PHY. To be ultra-precise, the PHY latency (approx. 235ns for a Davicom DM9161A) between PHY => cable would need to be added on top. |

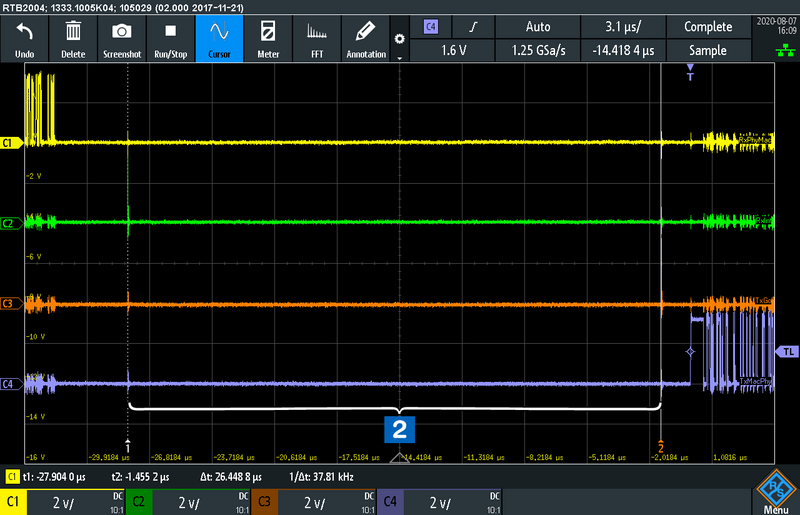

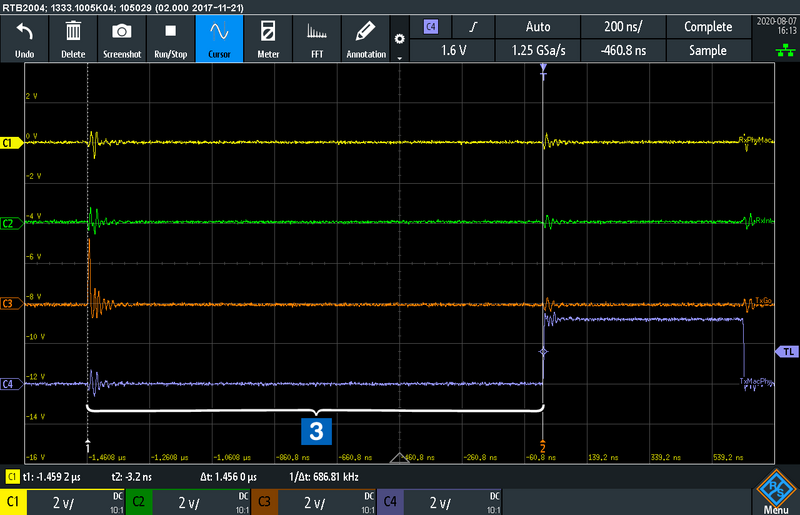

Rx hardware latency

The first section of the measurement shows the time from the last bit being transferred from the PHY => Ethernet MAC, through storing the packet in MCU RAM via DMA, until the reception of the packet is signaled via interrupt (signaled to the scope via set->clr GPIO#1 at the beginning of the interrupt handler).

Application response time

This section of the measurement shows the actual time for the software to evaluate the packet as Ethernet/IP/ICMP packet and queue a response with the Ethernet DMA to send back an answer (signaled to the scope via set->clr GPIO#2 after starting the Ethernet DMA).

Receiving and handling a packet in software involves different steps. In a simple overview for a PING-request these are:

- Receiving the Ethernet frame into a packet buffer usable by the network stack.

- Evaluating the received packet on the various protocol layers and checking for errors caused by actual problems or malicious exploit attempts. For PING these protocols/headers are Ethernet, IP and ICMP.

- Creating a PING response for a valid request and sending it back.
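The ICMP part of these steps can be illustrated with a minimal sketch. It is not how emNet implements it; it is a generic example under the assumption that Ethernet and IP have already been validated and that `pIcmp` points to the ICMP message inside the packet buffer. The function names are hypothetical. The checksum is the standard Internet checksum (RFC 1071).

```c
#include <stddef.h>
#include <stdint.h>

#define ICMP_TYPE_ECHO_REPLY    0u
#define ICMP_TYPE_ECHO_REQUEST  8u

// Internet checksum (RFC 1071) over NumBytes bytes, treating the data as
// big-endian 16-bit words. A message with a valid checksum field sums to 0.
static uint16_t InetChecksum(const uint8_t* pData, size_t NumBytes) {
  uint32_t Sum = 0;
  size_t   i;

  for (i = 0; i + 1 < NumBytes; i += 2) {
    Sum += ((uint32_t)pData[i] << 8) | pData[i + 1];
  }
  if (NumBytes & 1u) {                 // Odd length: pad last byte with zero
    Sum += (uint32_t)pData[NumBytes - 1] << 8;
  }
  while (Sum >> 16) {                  // Fold carries back into the low 16 bits
    Sum = (Sum & 0xFFFFu) + (Sum >> 16);
  }
  return (uint16_t)~Sum;
}

// Converts a valid ICMP echo request into an echo reply in place.
// Returns 0 on success, -1 if the message is dropped.
static int IcmpEchoReplyInPlace(uint8_t* pIcmp, size_t NumBytes) {
  uint16_t Checksum;

  if (NumBytes < 8u) {
    return -1;                         // Shorter than the ICMP echo header
  }
  if ((pIcmp[0] != ICMP_TYPE_ECHO_REQUEST) || (pIcmp[1] != 0u)) {
    return -1;                         // Not an echo request
  }
  if (InetChecksum(pIcmp, NumBytes) != 0u) {
    return -1;                         // Corrupted message
  }
  pIcmp[0] = ICMP_TYPE_ECHO_REPLY;     // Identifier, sequence and payload stay as-is
  pIcmp[2] = 0u;
  pIcmp[3] = 0u;
  Checksum = InetChecksum(pIcmp, NumBytes);
  pIcmp[2] = (uint8_t)(Checksum >> 8);
  pIcmp[3] = (uint8_t)(Checksum & 0xFFu);
  return 0;
}
```

Reusing the received buffer for the reply avoids copying the payload, which is one reason the PING case is a good lower bound for the application response time.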

Tx hardware latency

The third and last section of the measurement shows the time between the packet being queued for sending with the Ethernet DMA (signaled to the scope via set->clr GPIO#2 after starting the Ethernet DMA) and the hardware starting to shift out the first bit of the packet from Ethernet MAC => PHY.
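The three sections are simply the differences between four points in time on the scope. A minimal sketch, assuming the four timestamps have been read from the cursors in nanoseconds; the type and function names are hypothetical:

```c
#include <stdint.h>

typedef struct {
  uint32_t RxHwNs;  // End of frame on RxPhyMac -> RxInt pulse
  uint32_t AppNs;   // RxInt pulse -> TxGo pulse
  uint32_t TxHwNs;  // TxGo pulse -> first bit on TxMacPhy
} RESPONSE_SECTIONS;

// Splits the response time into its three sections from four scope timestamps.
static RESPONSE_SECTIONS SplitResponse(uint32_t tRxEnd, uint32_t tRxInt,
                                       uint32_t tTxGo,  uint32_t tTxStart) {
  RESPONSE_SECTIONS s;

  s.RxHwNs = tRxInt   - tRxEnd;
  s.AppNs  = tTxGo    - tRxInt;
  s.TxHwNs = tTxStart - tTxGo;
  return s;
}

// Overall reaction time as the sum of the three sections.
static uint32_t TotalResponseNs(const RESPONSE_SECTIONS* pSections) {
  return pSections->RxHwNs + pSections->AppNs + pSections->TxHwNs;
}
```

With the emPower values listed in the results table of this article (3641ns, 26449ns and 1456ns), the sum is 31546ns, which matches the overall reaction time of ~31.55us.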

Fast-Ethernet (100Mbit) response time for a 64 byte TCP packet

Compared with the more simplistic ICMP protocol used for a PING, the TCP protocol involves more complex logic in terms of checks and calculations due to its nature.

The same measurement setup as before has been used to measure the response time for a TCP packet with a 1 byte payload. As the size of headers plus payload and FCS is less than 64 bytes, the frame is automatically padded to the Ethernet minimum size of 64 bytes. The hardware-specific runtimes between PHY<->MAC remain almost the same and are therefore not analyzed further. They might however vary slightly, as the TCP header is larger and its checksum calculation is more complex than the checksum of the ICMP protocol.
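The padding can be verified with a short calculation. The sketch below assumes IPv4 and TCP headers without options; the helper names are hypothetical.

```c
#include <stdint.h>

#define ETH_HEADER_LEN   14u  // Destination MAC + source MAC + EtherType
#define IPV4_HEADER_LEN  20u  // Without options
#define TCP_HEADER_LEN   20u  // Without options (assumption for this sketch)
#define ETH_FCS_LEN       4u  // Frame Check Sequence
#define ETH_MIN_FRAME    64u  // Minimum frame size including FCS

// Frame length including FCS before any padding is applied.
static uint32_t TcpFrameLenUnpadded(uint32_t PayloadLen) {
  return ETH_HEADER_LEN + IPV4_HEADER_LEN + TCP_HEADER_LEN + PayloadLen + ETH_FCS_LEN;
}

// Number of padding bytes required to reach the Ethernet minimum frame size.
static uint32_t EthPadLen(uint32_t UnpaddedFrameLen) {
  return (UnpaddedFrameLen < ETH_MIN_FRAME) ? (ETH_MIN_FRAME - UnpaddedFrameLen) : 0u;
}
```

A 1 byte TCP payload gives 59 bytes before padding, so 5 padding bytes are added to reach 64 bytes.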

To measure the TCP response time, a simple TCP echo sample (IP_TCP_Echo_Server.c/IP_TCP_Echo_Client.c) is used. By default the sample uses the BSD Socket API recv() and send().

Using BSD Socket API

The application response time is ~83.77us when using the generic BSD socket API. This involves at least one additional memcpy() operation compared to the PING case, as received data is presented via the Rx socket buffer instead of the packet data being handed over directly to the application via a callback. The same applies to sending back via the socket API.
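The core of such an echo via recv() and send() can be sketched as follows. This is not the code of IP_TCP_Echo_Server.c; it is a generic illustration that uses the POSIX socket headers as a stand-in (with emNet, the stack's own header would be included instead), and the function name `EchoOnce()` is hypothetical.

```c
#include <sys/types.h>
#include <sys/socket.h>

// Receives up to BufferSize bytes from a connected TCP socket and sends them
// back. Returns the number of bytes echoed, 0 if the peer closed the
// connection, or -1 on error.
static int EchoOnce(int Sock, char* pBuffer, int BufferSize) {
  int NumBytes;
  int NumSent;
  int r;

  // Copy #1: Rx socket buffer -> application buffer
  NumBytes = (int)recv(Sock, pBuffer, (size_t)BufferSize, 0);
  if (NumBytes <= 0) {
    return NumBytes;
  }
  NumSent = 0;
  while (NumSent < NumBytes) {
    // Copy #2: application buffer -> Tx socket buffer
    r = (int)send(Sock, pBuffer + NumSent, (size_t)(NumBytes - NumSent), 0);
    if (r < 0) {
      return -1;
    }
    NumSent += r;
  }
  return NumBytes;
}
```

The two marked copies are the overhead that the PING path does not have.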

Using TCP zero-copy API

While sending basically requires using a socket buffer to retain the TCP data until it is acknowledged by the receiver, emNet offers a TCP zero-copy API to reduce the overhead on the local receiving part. The TCP echo sample supports switching the test case by changing a single define in the configuration section of the sample.

The application response time can be brought down to ~50.12us using the zero-copy API while still applying the same protocol sanity checks as before.

Gigabit Ethernet response times

While the theoretical throughput has increased tenfold, the same does not apply to the reaction/response time. With Fast-Ethernet (100Mbit), the signal on the cable is actually transferred at a symbol rate of 125MBd, using one cable pair for each direction. This means that half of the cable pairs of a regular Cat 5e cable are not used for Fast-Ethernet.

A standard Cat 5e cable is specified for up to 100MHz, and this remains sufficient for Gigabit usage. Gigabit Ethernet uses all four cable pairs and modulates the signal differently, so the same standard Cat 5e cable can be used for both Fast-Ethernet and Gigabit Ethernet. Which of the two is used depends on what both sides agree on, negotiated by a low-level link protocol on the cable.

Because Fast-Ethernet and Gigabit Ethernet still share many protocol properties, not all timings scale linearly with the plain data throughput that can be achieved.

Gigabit PING response time

To measure the Gigabit Ethernet response time for PING and a 1 byte TCP echo, the emPower-Zynq has been used. The setup remains the same, measuring the Rx hardware latency, application response time and Tx hardware latency.

The table below compares the results for a 64 byte PING on a 168MHz Cortex-M4F (emPower) using Fast-Ethernet with those on a Cortex-A (emPower-Zynq) running at 666MHz, fully cached, using Gigabit Ethernet:

| Platform | Rx hardware latency | Application response time | Tx hardware latency |
|---|---|---|---|
| emPower @168MHz, Fast-Ethernet | ~3.641us | ~26.449us | ~1.456us |
| emPower-Zynq @666MHz, Gigabit Ethernet | ~1.699us | ~7.819us | ~1.083us |

The emPower-Zynq running @666MHz is clocked roughly 4 times faster than the emPower (K66) running @168MHz. The application response time drops to approx. 30% (~7.819us vs. ~26.449us), which is close to the expected scaling based on plain processing power. The scaling is not exactly linear, as variations exist due to different timings of components like memory, a cache being present and used, a different Ethernet IP and emNet NI driver, etc.

Gigabit TCP response time

Echoing back a 1 byte TCP payload using Gigabit Ethernet takes ~21.100us with the BSD socket API.

This can be reduced to ~14.949us by using the emNet TCP zero-copy API to receive TCP data.

These times are again roughly a quarter of the application response time measured for the emPower (K66), therefore scaling based on plain processing power as expected.
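The scaling statements for PING and TCP can be cross-checked with a few divisions. The sketch below is only an illustration; the helper name `Speedup()` is hypothetical, and the inputs are the measurements quoted in this article.

```c
// Speedup factor of the faster platform: reference value divided by the
// value of the faster platform (both in the same unit).
static double Speedup(double Ref, double Fast) {
  return Ref / Fast;
}

// Clock ratio of the two platforms: 666MHz / 168MHz.
static double ClockRatio(void) {
  return 666.0 / 168.0;
}
```

With the values from this article, the clock ratio is about 3.96. The application response time improves by a factor of about 3.38 for PING (26.449us vs. 7.819us), about 3.97 for TCP via the BSD socket API (83.77us vs. 21.100us) and about 3.35 via the zero-copy API (50.12us vs. 14.949us). The Rx and Tx hardware latencies improve only by factors of about 2.14 and 1.34, which shows that the hardware paths do not scale with CPU clock or link speed in the same way.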